The Evolution of Search: Past, present & future.

An insight into how search engines work, how their algorithms have changed, and what lies ahead for search

In the previous issue of this newsletter, I talked about how you can make the most of your Google search. It got me thinking about the bigger picture that has put everything at our fingertips, just one search away: the evolution of web search over the past three decades.

We have come a long way from browsing books and encyclopaedias to using search engines. While search engines have made our lives easier, they have also changed the way we interact with information and absorb it.

In this piece in Time, author James Cortada says that we have developed an index-based approach to browsing information, similar to looking up keywords in the index at the end of a book and jumping to the specific pages, instead of starting from the table of contents and reading through. The latter offers information in a much more structured way, and we end up learning a lot more than what we were actually looking for. He gave the following example:

“Imagine you want to know what year John F. Kennedy was elected President. If you had an encyclopedia, you would look at the entry for JFK and find out the answer (1960), but you’d also have to skim past JFK’s birth date and birthplace, and probably lots more. If you had a smartphone and Google, on the other hand, you would specifically look up the year he was elected, and the year is right there at the top. The more we use services like Google, the more our brains organize the world in an index-based fashion. This also means people who make a living providing information are increasingly organizing their presentation to catch eyeballs looking for specific details in indexes”

I guess we can agree that we now look at the world in an index-based way. It’s quite interesting to look at how we got here and what lies ahead for search engines.

Outline:

> How Search Engines Work

> The Early Players

> The Dominance of Google

> Mobile Search

> The Future of Search

How Search Engines Work

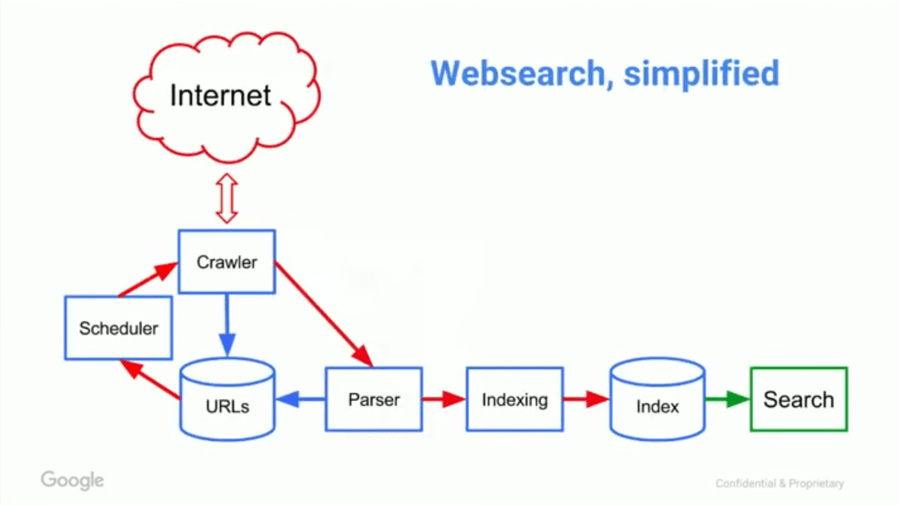

The first step in understanding the evolution of search is to understand how search engines work. The core idea behind any search engine is information retrieval based on a user’s query. The three primary steps through which any search engine accomplishes this are crawling, indexing and ranking.

All this work is done round the clock by search engines so that whenever a user comes up with a query, they will have the most relevant results to display. Let us look closely at these three interlinked steps:

1. Crawling

Search engines employ robots, often called ‘spiders’, that crawl the web looking for new pages, content and information. You can think of them as NASA’s satellites and probes that are sent into space to discover new galaxies, star systems, etc.

Crawlers look for all kinds of content, be it an image, a video or a PDF file, as long as it is discoverable via links. These links are collected for the next step.

These bots will follow the links on the page and go to new webpages to collect the URLs, thus building a map of interlinked pages. These bots also re-crawl webpages to look for new content from time to time based on a scheduler.
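To make the crawling step concrete, here is a minimal sketch in Python. It assumes a tiny made-up web (`TOY_WEB`) held in memory instead of real HTTP requests. The URLs and the `crawl` function are my own illustration, not how any particular search engine does it, and real crawlers also handle scheduling, politeness rules and re-crawling.

```python
from collections import deque

# Hypothetical in-memory "web": each URL maps to the links found on that page.
TOY_WEB = {
    "a.example/": ["a.example/about", "b.example/"],
    "a.example/about": ["a.example/"],
    "b.example/": ["c.example/", "a.example/"],
    "c.example/": [],
}


def crawl(seed, fetch_links):
    """Breadth-first crawl starting at `seed`; returns URLs in discovery order."""
    seen = {seed}
    queue = deque([seed])
    order = []
    while queue:
        url = queue.popleft()
        order.append(url)
        for link in fetch_links(url):
            if link not in seen:  # skip pages that were already discovered
                seen.add(link)
                queue.append(link)
    return order


discovered = crawl("a.example/", lambda url: TOY_WEB.get(url, []))
print(discovered)
# ['a.example/', 'a.example/about', 'b.example/', 'c.example/']
```

The `seen` set is what keeps the bot from looping forever when pages link back to each other, which they almost always do.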

2. Indexing

The next step involves the storage and organization of the information collected by crawlers. In between, parsers extract data from the crawled pages and send it for indexing based on keywords. You can think of an index as a huge digital library holding truckloads of catalogued data. The index contains the data that is served to users based on the keywords they search for.
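A common way to picture such an index is an inverted index: a mapping from each keyword to the pages that contain it. The sketch below is a simplified illustration under my own assumptions (three made-up pages in `PAGES`, a naive tokenizer, and a query that must match every word). Production indexes store far more, such as word positions and metadata.

```python
import re
from collections import defaultdict

# Hypothetical crawled pages: URL -> extracted text.
PAGES = {
    "cafe.example": "Best cafe in town with fresh coffee",
    "news.example": "Coffee prices rise as cafe chains expand",
    "books.example": "Fresh picks for your reading list",
}


def tokenize(text):
    """Lowercase the text and split it into simple alphanumeric words."""
    return re.findall(r"[a-z0-9]+", text.lower())


def build_index(pages):
    """Map every word to the set of URLs whose text contains it."""
    index = defaultdict(set)
    for url, text in pages.items():
        for word in tokenize(text):
            index[word].add(url)
    return index


def lookup(index, query):
    """Return the URLs that contain every word of the query."""
    words = tokenize(query)
    if not words:
        return set()
    result = set(index.get(words[0], set()))
    for word in words[1:]:
        result &= index.get(word, set())
    return result


INDEX = build_index(PAGES)
print(sorted(lookup(INDEX, "fresh coffee")))  # ['cafe.example']
```

Because the lookup is a set intersection over precomputed entries, answering a query does not require re-reading any page.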

3. Ranking

The third step, and the most vital from a user’s viewpoint, is the ranking of webpages and information, i.e. the order in which the results are displayed on search engine result pages (SERPs).

A search engine ranks the results from most relevant to least relevant so that any user’s query is answered satisfactorily within a fraction of a second. This quick ranking is accomplished via a series of search algorithms that are unique to every search engine. Algorithms analyze content based on several factors such as location, backlinks, freshness (how new the content is), readability, etc. If you are interested in the working of search algorithms, check this piece by Google.
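One way to picture how several factors combine into a single order is a weighted score. The sketch below is purely illustrative: the `Page` fields, the weights in `WEIGHTS` and the formulas for the link and freshness signals are all assumptions of mine, not the factors or weights of any real search engine.

```python
from dataclasses import dataclass


@dataclass
class Page:
    url: str
    keyword_match: float  # 0..1, how well the text matches the query (assumed given)
    backlinks: int        # number of other pages linking here
    age_days: int         # days since the content was published


# Illustrative weights only; real ranking factors and weights are not public.
WEIGHTS = {"match": 0.6, "links": 0.3, "fresh": 0.1}


def score(page):
    """Combine the three signals into one number between 0 and 1."""
    link_signal = page.backlinks / (page.backlinks + 10)  # diminishing returns
    fresh_signal = 1 / (1 + page.age_days / 30)           # decays with age
    return (WEIGHTS["match"] * page.keyword_match
            + WEIGHTS["links"] * link_signal
            + WEIGHTS["fresh"] * fresh_signal)


def rank(pages):
    """Order pages from highest to lowest score."""
    return sorted(pages, key=score, reverse=True)


CANDIDATES = [
    Page("old-but-cited.example", keyword_match=0.8, backlinks=90, age_days=300),
    Page("fresh-but-unknown.example", keyword_match=0.8, backlinks=0, age_days=3),
    Page("off-topic.example", keyword_match=0.1, backlinks=500, age_days=1),
]

for page in rank(CANDIDATES):
    print(page.url, round(score(page), 3))
```

With these made-up weights, the well-cited page comes first and the off-topic page comes last despite its many links, because the match signal dominates.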

Now that we have a basic idea of the mechanisms at work in the background, it will be easier to analyze what the initial search engines did and how Google captured the search engine market, eventually shaping web search as we know it today.

The Early Players

The Internet owes its origin to the development of ARPANET in 1969, but search engines arrived only about two decades later, in the 90s. Before that, people shared website links and names directly and through word of mouth. To put things into perspective, I’ll use a timeline:

1990: The first search engine, Archie, appears. Archie indexed public files available for download from sites that used FTP (File Transfer Protocol). Due to limited space, it did not index the content of these sites and only provided their directory listings.

1991: The Gopher protocol arrives, and over the next two years it gives rise to two new search engines: Veronica and Jughead (named after characters from the Archie comics!), which index file names and titles stored in Gopher index systems.

1992: The Virtual Library (VLib) is set up by Tim Berners-Lee at CERN to host a list of web servers in the initial days of the web.

1993: The first known web crawler, the World Wide Web Wanderer, is developed. It measured the growth of the web and stored the results in the Wandex database.

The first web-based search engines, W3Catalog and Aliweb, are released. They did not use web crawlers and were dependent on a list of websites.

By the end of the year, JumpStation, the first web search engine to use crawling and indexing to display search results, is released.

1994: Yahoo is born! Created by David Filo and Jerry Yang, it became the first popular web directory. It relied on humans to provide short descriptions for each URL and organize them into categories.

WebCrawler is released, becoming the first search engine to index entire webpages, i.e. users could now search for any word on any webpage and not just site URLs. It became so popular that it was often impossible to access during the daytime.

Lycos is released with a system that ranks sites by relevance, matching keywords by prefix and taking word proximity into account.

Apart from Lycos, two other web search engines are launched: Infoseek and World-Wide Web Worm.

1995: LookSmart, a rival web directory to Yahoo, is released.

Yahoo launches Yahoo Search to allow users to search the Yahoo directory, becoming the first popular search engine. However, it did not use web crawlers.

AltaVista, the first search engine to allow natural language queries, is released. Apart from being the first to have unlimited bandwidth, it had advanced search features and allowed users to add and delete their own URLs within 24 hours.

1996: Robin Li develops the RankDex algorithm, which uses hyperlinks to measure the quality of web pages. It later forms the basis of the Baidu search engine.

Larry Page and Sergey Brin start working on BackRub, Google’s predecessor, which uses backlinks for search, i.e. it ranks pages based on the number of citations, or the number of times they are linked to from other webpages.

Inktomi releases the HotBot search engine.

1997: Ask Jeeves, a natural-language web search engine that ranks sites based on their popularity, is launched. It later becomes Ask.com.

1998: Google is launched and makes its search available to the public.

MSN launches a search portal called MSN Search, using results from Inktomi and LookSmart.

1999: AlltheWeb is launched with more advanced search features and a fresh database.

2000: Baidu is launched by Robin Li.

2002: Yahoo buys Inktomi and then Overture, which had itself bought AltaVista and AlltheWeb.

2003: Yahoo starts using its own web crawler for Yahoo Search.

2004: Microsoft starts using its own crawler and indexer for MSN Search.

The subsequent years saw acquisitions of smaller search engines by bigger players to widen their reach.

It is easy to see how, in the early days, the first two steps (crawling and indexing) played the major role.

Ranking, by contrast, was simplistic: these engines ranked results on the basis of keywords alone. For example, if I’m searching for a cafe, the website that mentions ‘cafe’ more often than any other website would be ranked at the top. This made them prone to spamming and decreased the relevance of their results.
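The cafe example is easy to reproduce. The sketch below ranks two made-up pages purely by how often a keyword appears. The page texts and function names are hypothetical and only meant to show why this approach invites spam.

```python
import re


def keyword_count(text, keyword):
    """Count how many times `keyword` appears as a whole word in `text`."""
    return re.findall(r"[a-z]+", text.lower()).count(keyword.lower())


# Two hypothetical pages: a genuine cafe and a keyword-stuffed spam page.
PAGES = {
    "real-cafe.example": "Our cafe serves espresso, pastries and lunch every day.",
    "spam.example": "cafe cafe cafe cafe cheap cafe best cafe click here",
}


def rank_by_keyword(pages, keyword):
    """Order URLs by raw keyword frequency, highest first."""
    return sorted(pages, key=lambda url: keyword_count(pages[url], keyword), reverse=True)


ranking = rank_by_keyword(PAGES, "cafe")
print(ranking)  # ['spam.example', 'real-cafe.example']
```

The spam page wins simply by repeating the word, which is exactly the weakness that link-based ranking set out to address.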

The Dominance of Google

Google, instead of the keyword ranking approach, came up with its citation-based ranking system. A site that had more links pointing to it was placed higher on the SERP. To picture it better, you can think of these links as votes. The founders believed that a site that has been cited multiple times holds more credibility and trustworthiness, and is thus more relevant.

Due to this unique ranking method, Google’s search results became more popular than the others. Over the past two decades, Google has continuously aimed to make the search experience smoother, faster, and as efficient as it can be via a series of changes, both visible ones on the front-end and changes to the back-end algorithms. At Google, change has been the only constant.
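Here is a small sketch of the links-as-votes idea. It first counts inbound links, then runs a simplified version of the published PageRank iteration, in which a vote from a highly ranked page counts for more. The four-page graph `LINKS`, the damping value and the iteration count are assumptions for illustration, the code assumes every link target is itself a key in `LINKS`, and none of this reflects Google’s production system.

```python
# Hypothetical link graph: page -> pages it links to.
LINKS = {
    "a": ["b", "c"],
    "b": ["c"],
    "c": ["a"],
    "d": ["c"],
}


def inbound_counts(links):
    """Count the raw number of 'votes' (inbound links) for each page."""
    counts = {page: 0 for page in links}
    for targets in links.values():
        for target in targets:
            counts[target] = counts.get(target, 0) + 1
    return counts


def pagerank(links, damping=0.85, iterations=50):
    """Simplified PageRank by repeated redistribution of rank along links."""
    pages = list(links)
    n = len(pages)
    rank = {page: 1 / n for page in pages}
    for _ in range(iterations):
        new_rank = {page: (1 - damping) / n for page in pages}
        for page, targets in links.items():
            if targets:
                share = damping * rank[page] / len(targets)
                for target in targets:
                    new_rank[target] += share
            else:
                # A page with no outgoing links spreads its rank evenly.
                for target in pages:
                    new_rank[target] += damping * rank[page] / n
        rank = new_rank
    return rank


votes = inbound_counts(LINKS)
ranks = pagerank(LINKS)
print(votes)
print(sorted(ranks, key=ranks.get, reverse=True))
```

In this toy graph, page `c` collects the most votes and also ends up with the highest rank, while page `d`, which nobody links to, ends up last.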

Feature Changes

On the front-end, that is, the SERPs, Google has introduced a series of feature updates that change how information is returned for a user’s query. A timeline of these changes can be seen in the image below:

AdWords

Google introduced AdWords back in 2000, prior to which it was free of ads. Ads were the first feature change to appear on SERPs. Since then, Google has stuck to its motto: ‘We only sell ads, not search results’.

Google makes money via these ads but separates them from the organic search results: advertisements always have ‘Ad’ written in bold on top of them. At the same time, Google ensures that only ads relevant to the search query are displayed. These ads are usually placed at the top of the results.

Universal Search

Google soon expanded beyond web pages to categorize information vertically by content type, and called it ‘universal search’. Images came in 2001, followed by news, maps, and videos by 2006.

The introduction of this vertical search was a step forward in making results more relevant to a user. For example, the search query ‘Pixel unboxing’ will automatically lead to a series of video links instead of other web links. Further, users looking for a specific type of information could now click directly on the relevant tab and browse there.

In this period, Google also worked on features like ‘Did you mean’, which suggests the correct phrase when you mistype something and automatically displays results for the corrected words. Features like Google Suggest made real-time predictions for search terms, completing your search query before you finish typing it and offering those options in the search dropdown.
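Both ideas can be sketched in a few lines of Python using the standard library’s `difflib`. This is only a toy: the list `KNOWN_QUERIES`, the similarity cutoff of 0.8 and the function names are my assumptions, and Google’s actual features draw on vastly larger query logs and different techniques.

```python
import difflib

# Hypothetical list of queries that other users have typed before.
KNOWN_QUERIES = [
    "pixel unboxing",
    "pixel review",
    "pizza near me",
    "weather today",
]


def did_you_mean(query, known=KNOWN_QUERIES):
    """Suggest the closest known query, or None if nothing is close enough."""
    query = query.lower()
    matches = difflib.get_close_matches(query, known, n=1, cutoff=0.8)
    if matches and matches[0] != query:
        return matches[0]
    return None


def suggest(prefix, known=KNOWN_QUERIES, limit=3):
    """Complete a partially typed query with known queries sharing its prefix."""
    prefix = prefix.lower()
    return [q for q in known if q.startswith(prefix)][:limit]


print(did_you_mean("pixle unboxing"))  # pixel unboxing
print(suggest("pix"))                  # ['pixel unboxing', 'pixel review']
```

A correctly spelled query returns `None` from `did_you_mean`, so the suggestion only shows up when there is something to correct.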

Featured Snippets

These are small previews seen in the topmost part of SERPs that aim to quickly answer a user’s query without them having to click on any website. I talked about these features at length in the previous issue: you can check movie times and the weather, ask for a celebrity’s height, and a lot more.

Knowledge Graph

Have you seen that little infobox on the right side of the SERP when you search for a famous person or a disease? That’s the Knowledge Graph for you. Launched in 2012 as a way to significantly enhance the value of information returned by search, the Knowledge Graph uses a detailed and structured format to answer the query on the SERP itself.

Carousel

The Knowledge Graph is often accompanied by the Carousel too. The Carousel is simply a row of visual results that are similar or related to your search query; you can think of them as recommendations by Google. For example, if I search for Madonna, Google will show ‘People also search for’ at the bottom of the Knowledge Graph, which upon clicking opens a carousel of personalities related to Madonna or other famous singers and actors. The Carousel becomes especially helpful when you are looking for local sights and places to visit in a new city.

Algorithms

So far, much of the talk has been about the front-end, so let us come back to the back-end, i.e. the algorithms. It’s amazing how Google’s search results end up giving you exactly what you were looking for, cementing its place at the top. This has been achieved through a series of changes and updates to the basic algorithm.

While Google claims to make more than a thousand changes every year, which are too minute to be noticed, I’ll talk about some of the biggest updates that have had a major impact in improving a user’s search experience.

Good Link vs. Bad Link

As early as 2003, Google was busy determining the nature of the links that pointed to a site in order to improve the relevance of its search results. Remember the vote analogy? Just like votes, these links to a site could also be manipulated. Google identified this problem and began cracking down on sites that exploited backlinks to rank higher, using multiple sites created just for the sake of increasing their citations.

Panda

The Panda algorithm went live in 2011 to crack down on content farms, i.e. sites that stuffed their pages with keywords but ultimately served useless content. Google began demoting these thin-content, duplicate or plagiarized, and ad-heavy pages that ruined user experience, in order to promote better content. This update, now integrated with the core algorithm, is also called the Farmer update, as it targets content farms and cuts through a lot of junk to make way for the best content out there.

Penguin

One of the most brutal algorithms employed by Google, the Penguin update came in 2012 to tackle the problem of web spam, namely bad links and keyword stuffing. Sites using tactics such as buying links from link farms to improve their search rankings, or linking to unrelated topics, were penalized by being pushed lower and lower in the SERPs for months or years. Moreover, Google could also de-index these sites, making them completely invisible in its search.

Hummingbird

The first algorithm to analyze the intent behind a user’s search query, Hummingbird was launched in 2013. Using natural language processing, Hummingbird helped Google interpret search queries better by looking out for similar words and synonyms. This was in contrast to the keyword approach of the early search engines: now a SERP could contain links that didn’t contain your keyword at all!
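To see how a result can match without containing your keyword, consider the toy example below. It expands each query term with a small hand-written synonym table. The table `SYNONYMS`, the two pages and the crude substring matching are all assumptions for illustration; Hummingbird’s actual language processing is far more sophisticated than a dictionary lookup.

```python
# Hypothetical synonym table; a real system would learn such relations from data.
SYNONYMS = {
    "cafe": {"coffee shop", "coffeehouse"},
    "cheap": {"affordable", "budget"},
}

# Two made-up pages: URL -> page text.
PAGES = {
    "beans.example": "an affordable coffee shop near the station",
    "luxe.example": "fine dining with a tasting menu",
}


def expand(term):
    """Return the term itself plus any synonyms listed for it."""
    return {term} | SYNONYMS.get(term, set())


def matches(text, query):
    """True if, for every query term, the text contains it or one of its synonyms."""
    text = text.lower()
    return all(
        any(variant in text for variant in expand(term))
        for term in query.lower().split()
    )


def search(pages, query):
    """Return the URLs whose text matches the synonym-expanded query."""
    return [url for url, text in pages.items() if matches(text, query)]


print(search(PAGES, "cheap cafe"))  # ['beans.example']
```

Neither ‘cheap’ nor ‘cafe’ appears anywhere in the matching page, yet it is returned because ‘affordable’ and ‘coffee shop’ stand in for them.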

Pigeon

Launched in 2014, the Pigeon update aimed to blur the gap between web search and map search. This was a key update for local searches, modifying the way Google interpreted location cues. The update delivered more relevant and accurate local search results.

Mobilegeddon

A fancy term for the mobile update launched in 2015, Mobilegeddon gives preference to sites that display well on mobile platforms such as smartphones and tablets. The content of the results is not affected, but sites with a more user-friendly mobile version are pushed higher in the search rankings.

RankBrain

With the launch of RankBrain as a part of Hummingbird in 2015, Google revealed that machine learning had been playing an integral role in its ranking algorithms, helping them understand user intent and suggest relevant results. The scope of the search is expanded to include similar terms or implied words that the user might have missed, and results also take the user’s personal search history into account. For example, if you keep visiting certain sites regularly, you’ll see those pages ranking higher in your search results.

Possum

The next big update to local searches after Pigeon, Possum was released in 2016 giving more preference to the actual location of the user. Depending on their location, two users will get different search results. The update diversified local searches as well as penalized spam sites.

Core Updates

Since 2017, Google has been rolling out core updates that are supposed to be minor improvements over the previous ones. These are a lot less transparent, and no one knows exactly what they do. For instance, the unofficial Fred update came in 2017, impacting the search ranking of multiple pages. It was later found that the update cracked down on content websites that prioritized revenue generation over content and thus ruined user experience. The effects of these updates are analyzed only post-release, and there’s no conclusive result.

BERT

The latest major algorithm update since RankBrain, BERT was launched in 2019 to better understand natural language. BERT stands for Bidirectional Encoder Representations from Transformers. Based on neural networks, it aims to better understand search queries and interpret text in context, in order to identify the user’s intent as closely as possible. The update also impacted featured snippets, returning more accurate information than before. You can read about it and see the changes here.

Mobile Search

The smartphone revolution meant a great deal for web search, because there was now a completely new dimension to it that was literally at your fingertips for most of the day.

The ever-increasing dependence on smartphones meant that the way we interact with information also changed. Google had realized the potential of smartphones way back in 2008, and directed its efforts towards Android development. Today, Android runs on more than 80% of smartphones worldwide.

From 2016 onwards, mobile searches have accounted for more than 50% of the searches made on Google. While Google’s web search has evolved over the years, becoming more consistent, precise and reliable, the company is putting in its best efforts to offer the same on mobile platforms in terms of usability and accessibility. Here, featured snippets and the Knowledge Graph have been especially useful in providing relevant information as quickly as possible.

Location-based searches

Google could now make use of the in-built GPS in mobile devices to integrate local/location-specific features in search results. Upon allowing location access to the Google app, it becomes easier to find almost any service near you as well as get directions to them via Google Maps integration.
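As a rough sketch of what ‘near you’ means in practice, the code below sorts a few places by their great-circle distance from the user, computed with the haversine formula. The place names and coordinates in `PLACES` are made up, and real local search blends distance with many other signals, so treat this only as an illustration of the distance part.

```python
import math


def haversine_km(lat1, lon1, lat2, lon2):
    """Great-circle distance in kilometres between two latitude/longitude points."""
    radius_km = 6371.0  # mean Earth radius
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    d_phi = phi2 - phi1
    d_lambda = math.radians(lon2 - lon1)
    a = (math.sin(d_phi / 2) ** 2
         + math.cos(phi1) * math.cos(phi2) * math.sin(d_lambda / 2) ** 2)
    return 2 * radius_km * math.asin(math.sqrt(a))


# Hypothetical places: (name, latitude, longitude).
PLACES = [
    ("Cafe North", 28.70, 77.10),
    ("Cafe Centre", 28.61, 77.21),
    ("Cafe South", 28.50, 77.30),
]


def nearest(places, lat, lon):
    """Order places from closest to farthest from the user's location."""
    return sorted(places, key=lambda p: haversine_km(lat, lon, p[1], p[2]))


# Two users in different spots get differently ordered results for the same query.
print([name for name, _, _ in nearest(PLACES, 28.62, 77.20)])
print([name for name, _, _ in nearest(PLACES, 28.69, 77.11)])
```

The first user sees ‘Cafe Centre’ at the top and the second sees ‘Cafe North’, which mirrors how two people typing the same query can get different local results.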

Voice Search

The way technology strives to bring a human element into our interaction with it is endearing. With personal assistants like Alexa, Siri, and Cortana, voice search and our interactions with technology have taken on a conversational tone. The fact that “Ok Google” transforms your device into a rather fetching pal that will scour the World Wide Web for whatever you ask for is undoubtedly special. Save for the times when they don’t quite understand what we have asked for, voice searches have become the way ahead, with around 20% of mobile phone users choosing to talk to their phones rather than type.

Widely considered the future of search, voice search will eliminate the need for SERPs completely, with information exchange taking place directly between the AI assistant and the user. The in-built microphone and voice recognition software together make the experience of voice search on mobiles seamless.

Accelerated Mobile Pages

Have you noticed a tiny bolt icon next to the title on mobile SERPs? The bolt stands for Accelerated Mobile Pages (AMPs), i.e. pages with faster content loading times. This is one of the ways in which Google enhances mobile performance: by loading streamlined, lighter content. AMPs are said to be the future of mobile web pages given their super quick loading times, and are likely to rank higher in the years to come.

The Future of Search

As mentioned before, the future will belong to voice and Artificial Intelligence. With the smartphone market expanding day by day, major shifts are expected to happen in that direction. Both these features combined will make the search engines highly assistive and predictive in nature to streamline the user experience.

In the previous sections, I talked about RankBrain and BERT algorithms that are powered by machine learning and neural networks to better understand the intent behind a search. Taking data points such as location, browsing history and other search queries, the search engines are now able to give more personalized results (some of which can be downright creepy, given their accuracy).

AI and automation in general are the future, and we have only just begun to realize their true potential. Technologies such as Google Lens have already transformed the field of visual search. In the future, simply pointing your phone towards an object should bring up all the results related to it. For instance, you point your phone camera towards a movie poster and you get the nearest theatres running the movie and its show timings, or you point it towards a dish and you are directed to restaurants that serve it.

As with visual search, AI is also expected to have huge implications for the e-commerce industry. With AI, automatic content and catalog creation is a possibility, helping marketers place their products better. Amazon has become the go-to place for a large number of people when it comes to online shopping. People can search for products directly on Amazon and sort their results by price, features, reviews, etc. The in-built search function eliminates the need for any third-party search engine.

This trend points towards the rise of vertical search, i.e. searching within a specific category or on a specific website. For example, if I want to see what’s happening around the world, I can search for the latest news stories and trends on Twitter directly without asking Google. Similarly, sites such as Skyscanner will get me the list and schedules of flights to help me plan my trip. Or I’ll go directly to Zomato to browse the restaurants nearest to me. The increased efficiency of self-contained search engines, mainly in e-commerce services, is likely to bypass Google’s role as a connector.

Getting a feel for where search is heading is essential for digital marketers and developers who want to increase the visibility of their products. It will be interesting to see how Google itself adapts to and integrates these changes, given that the giant has been continuously striving to get better. One thing that we know for sure is that the era of search bars is coming to an end.

Would love to get your thoughts on this! Lmk what you’re most curious about in comments. I'm looking forward to writing more over the weekends, do let me know what you think! I’ll be writing on Remote work, startups, and some other topics I’m curious about in tech. 🙏